- October 13, 2025

- Posted by: damian

Deepfakes have moved from novelty to nuisance to real business risk. Attackers are layering AI‑generated voice, video, and hyper‑real text onto classic social engineering to accelerate wire fraud, override access controls, and pressure teams into bad decisions. The goal isn’t cinematic perfection. It’s believable urgency at scale.

What’s happening right now

- Voice cloning on a budget: As little as 30 to 60 seconds of clean audio can yield a passable voice model. Calls, voice notes, and IVR menus carry the payload.

- “Pass‑at‑a‑glance” video: Lip‑sync and face‑swap are convincing enough for short Zoom clips and quick “approvals.”

- Style‑matched text: Public posts and email leaks feed models that mimic executive tone and cadence.

- Multipliers: Deepfakes ride existing gaps such as weak verification, siloed comms, and “move fast” culture.

Business impact patterns we see

- Payments/procurement: “Urgent” wire or banking changes “from the CEO/vendor.”

- IT/security: “Temporary MFA bypass” or “new device enrollment” requests.

- Investor/PR: Fake statements that move markets and damage reputation.

- Admin impersonation: Realistic voices prompting password resets and privilege changes.

What works now: practical defenses

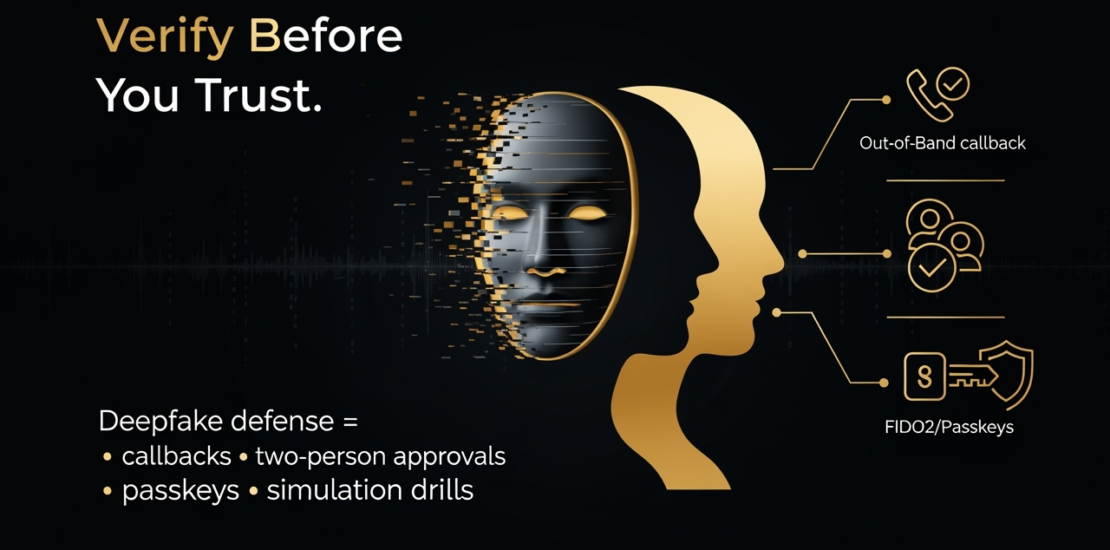

Verification rituals (policy beats pixel‑peeping)

- Out‑of‑band callbacks: For payment, credential, or access changes, require a call to a known number or a pre‑agreed phrase. No exceptions.

- Two‑person rule: Sensitive approvals require two independent verifiers from different functions (e.g., Finance + IT).
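
The two rules above can be expressed as a simple gate in an approval workflow. This is a minimal sketch, not a reference implementation: the `ChangeRequest` and `Approver` types, their fields, and the `may_proceed` function are hypothetical names chosen for illustration, and a real system would pull these values from your ERP or ticketing tool.

```python
from dataclasses import dataclass, field


@dataclass
class Approver:
    name: str
    function: str  # e.g. "Finance" or "IT"


@dataclass
class ChangeRequest:
    """A sensitive request such as a bank-detail or access change (hypothetical model)."""
    description: str
    # Set to True only after someone has called a known, pre-registered number.
    callback_confirmed: bool = False
    approvers: list[Approver] = field(default_factory=list)


def may_proceed(request: ChangeRequest) -> bool:
    """Allow the change only if the out-of-band callback happened AND at least
    two distinct people from at least two distinct functions have signed off."""
    if not request.callback_confirmed:
        return False
    names = {a.name for a in request.approvers}
    functions = {a.function for a in request.approvers}
    return len(names) >= 2 and len(functions) >= 2
```

The point of the design is that neither check depends on judging whether a voice or video looks real. A convincing fake still fails the gate if the callback or the second approver is missing.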

Harden identity and comms

- Phishing‑resistant MFA: Move admins/finance to passkeys/FIDO2; retire SMS for high‑risk users.

- Just‑in‑time access: Replace standing admin rights with time‑bound elevation; re‑auth for risky actions.

- Verification scripts: Give staff a short script for refusing/deferring suspicious requests without friction.
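
To make the just‑in‑time idea concrete, here is a small sketch of time‑bound elevation. It assumes a 30‑minute default window and uses hypothetical names (`ElevationGrant`, `grant_elevation`, `is_active`). Commercial PAM products implement this very differently, so treat it as an illustration of the concept only.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class ElevationGrant:
    user: str
    role: str
    expires_at: datetime  # timezone-aware UTC timestamp


def grant_elevation(user: str, role: str, minutes: int = 30,
                    now: Optional[datetime] = None) -> ElevationGrant:
    """Issue a grant that expires on its own; nothing needs to be revoked later."""
    now = now or datetime.now(timezone.utc)
    return ElevationGrant(user, role, now + timedelta(minutes=minutes))


def is_active(grant: ElevationGrant, now: Optional[datetime] = None) -> bool:
    """A grant is usable only strictly before its expiry time."""
    now = now or datetime.now(timezone.utc)
    return now < grant.expires_at
```

Because the grant carries its own expiry, an attacker who talks someone into an elevation gets a short window rather than a standing admin account.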

Manage your media footprint

- Limit clean voice samples: Share shorter clips with background audio; avoid long, studio‑clean uploads.

- Watermark official videos: Consistent lower‑thirds/branding; maintain an internal asset registry so comms can confirm authenticity quickly.
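
One simple way to back an internal asset registry is to record a SHA‑256 fingerprint of every official file at publication time. The sketch below assumes an in‑memory store and hypothetical names (`AssetRegistry`, `register`, `lookup`); a real registry would live in a database or DAM system.

```python
import hashlib
from typing import Optional


def fingerprint(media_bytes: bytes) -> str:
    """SHA-256 hex digest of the exact file contents."""
    return hashlib.sha256(media_bytes).hexdigest()


class AssetRegistry:
    """In-memory map from file fingerprint to the title of an official asset."""

    def __init__(self) -> None:
        self._assets: dict[str, str] = {}

    def register(self, title: str, media_bytes: bytes) -> str:
        digest = fingerprint(media_bytes)
        self._assets[digest] = title
        return digest

    def lookup(self, media_bytes: bytes) -> Optional[str]:
        """Return the asset title if this exact file was registered, else None."""
        return self._assets.get(fingerprint(media_bytes))
```

A caveat on this approach: an exact hash only matches byte‑identical copies. A genuine video that has been re‑encoded by a social platform will not match, so a miss means "not confirmed", not "fake". A hit, on the other hand, lets comms confirm authenticity in seconds.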

Train for modern social engineering

- Simulations: Run quarterly voice/video deepfake drills; include exec/vendor impersonation.

- Micro‑learning: 3 to 5 minute refreshers with “pause points” (e.g., what to ask, who to call).

- Incentives: Reward verified “no’s” as much as fast “yes’s”.

Incident playbook (make it muscle memory)

- Report path: Where to send suspected deepfakes; who leads IR/comms/legal.

- Containment: Freeze payment changes; rotate credentials; alert vendors/customers.

- Evidence: Preserve audio/video, headers, call metadata, and timestamps.

Tools landscape (representative, not endorsements)

Detection and verification

- Reality Defender, Hive Moderation: Commercial deepfake detection and risk scoring.

- Microsoft Video Authenticator, Intel FakeCatcher: Media analysis (enterprise/experimental).

- Pindrop, Nuance Gatekeeper: Voice integrity and call‑center fraud detection.

Training and simulation

- Adaptive Security: Next‑gen security training and AI attack simulations focused on deepfakes, vishing/smishing, and AI‑assisted email threats, helping teams practice verification under pressure.

- Cofense, KnowBe4: Phishing simulations/awareness; some vendors in this space are piloting AI‑deepfake modules.

Identity and comms hardening

- Passkeys/FIDO2 via Okta, Microsoft Entra, Duo.

- Privileged access/JIT via CyberArk, BeyondTrust, Delinea.

- Out‑of‑band verification via ITSM/ERP policies (ServiceNow/JSM; AP controls inside ERP).

Future outlook: where this is heading

- Cheaper, faster, more volume: Model quality will improve, but accessibility is the bigger shift; more attackers means more attempts.

- Context‑aware impersonation: Fakes will incorporate internal jargon, calendars, and org structure to sound “inside.”

- Real‑time conversion: Live voice‑morphing on calls and “attendance” by execs who never joined.

- Provenance grows up: Content credentials (C2PA), platform labels, and watermarking will help, but internal verification remains decisive.

- Defensive posture shift: The question becomes less “Is this real?” and more “What verification is required for this action?”
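
The last point, asking what verification an action requires rather than whether the media is real, maps naturally onto a policy table. The sketch below is an assumption‑laden illustration: the action names, the `Check` values, and the specific pairings in `REQUIRED_CHECKS` are hypothetical and should be replaced with your own policy.

```python
from enum import Enum


class Check(Enum):
    CALLBACK = "out-of-band callback"
    TWO_PERSON = "two-person approval"
    REAUTH = "phishing-resistant re-authentication"


# Hypothetical policy table: which checks each sensitive action requires.
REQUIRED_CHECKS = {
    "bank_detail_change": {Check.CALLBACK, Check.TWO_PERSON},
    "mfa_bypass": {Check.CALLBACK, Check.TWO_PERSON},
    "password_reset": {Check.CALLBACK},
    "privilege_change": {Check.TWO_PERSON, Check.REAUTH},
}

# Unknown actions fail closed: they require every check.
DEFAULT_CHECKS = frozenset(Check)


def missing_checks(action: str, completed: set) -> set:
    """Return the checks still outstanding before the action may go ahead."""
    required = REQUIRED_CHECKS.get(action, DEFAULT_CHECKS)
    return set(required) - set(completed)
```

An empty result means the action can proceed. Defaulting unknown actions to the strictest set is a deliberate choice here, so a novel request cannot slip through simply because nobody anticipated it.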

Checklist to deploy this week

- Payments: Enforce out‑of‑band callbacks + two‑person rule for bank/account changes.

- Identity: Move high‑risk users to FIDO2/passkeys; audit break‑glass accounts.

- Scripts: Issue a 3‑line “verify or decline” script; add a known callback number to email signatures.

- Training: Schedule a deepfake drill in the next 30 days (voice + video scenario).

- Comms: Publish official channels + media registry; designate a verification inbox/number.

- Vendors: Require MSPs and AP providers to adopt the same verification standards.

Bottom line

Deepfakes are less about pixels and more about pressure. Build deliberate friction where stakes are highest (think money movement, access, and reputation) and most deepfake attempts collapse into harmless noise.

Need a quick tune‑up for deepfake resilience? Book a 30‑minute session here, or reply for our Deepfake Response Checklist.

📧 Contact Us Today: marketing@disrisksolutions.com

🌐 Learn More: https://disrisksolutions.com

Prepare. Protect. Prevail.
